LLM visibility tracking tools help enterprises understand, monitor, control, and improve how their brand appears in AI-generated answers across platforms such as ChatGPT, Gemini, Perplexity, and Google AI Overviews. These tools show whether AI models mention a brand, how they describe it, and which sources they trust when recommending businesses.

Summary

AI discovery has become a primary way customers find and choose businesses. According to McKinsey, 50% of consumers already use AI search to inform their buying decisions, underscoring how quickly this shift is reshaping customer behavior. AI-generated answers typically surface only a handful of brands, selected based on accuracy, trust, and consistency. For multi-location enterprises, this shift has redefined visibility. Managing a few listings or tracking rankings is no longer enough. Every location must be accurately represented, consistently described, and backed by strong trust signals across the data sources LLMs rely on to generate answers.

This blog reviews the best LLM visibility tracking tools for multi-location enterprises in 2026, with a focus on platforms that support governance, automation, and execution across locations.

Table of contents

- Why basic LLM visibility tracking isn’t enough for multi-location enterprises

- What to look for in LLM visibility tracking tools for enterprises

- Which AI engines and discovery surfaces enterprises must monitor

- 10 Best LLM visibility tracking tools for multi-location enterprises in 2026

- FAQs about LLM visibility tracking tools

- Turning LLM visibility into an enterprise advantage with Birdeye

Why basic LLM visibility tracking isn’t enough for multi-location enterprises

For brands with dozens, hundreds, or thousands of locations, AI visibility isn’t just about detecting mentions. It’s about controlling the accuracy and consistency of the signals AI models use when recommending brands to potential customers, an increasingly important part of answer engine optimization (AEO).

What is Answer Engine Optimization?

Answer Engine Optimization (AEO) focuses on ensuring brands appear accurately and consistently within AI-generated answers. Unlike traditional SEO, which targets rankings and clicks, AEO is about how large language models interpret, trust, and recommend businesses when responding to user questions.

Traditional LLM visibility tools focus on monitoring mentions and citations. That approach breaks down at enterprise scale because:

- AI answers vary by market when listings, reviews, and structured data are inconsistent

- Monitoring reveals problems, but doesn’t resolve them

- Manual fixes across hundreds of locations are slow and error-prone

- Insights that can’t trigger action remain unused

These limitations make one thing clear: multi-location enterprises need more than reporting. They need a unified system that connects visibility insights directly to execution, so every location stays accurate, trusted, and eligible for recommendation across AI discovery platforms.

What to look for in LLM visibility tracking tools for enterprises

Enterprise teams evaluating LLM visibility tracking tools need more than monitoring dashboards. They need platforms that support governance, market-level reporting, and cross-functional execution across every single location.

Before comparing vendors, it’s important to align on the capabilities that matter for multi-location organizations:

Enterprise evaluation criteria:

- Market and location segmentation: Map AI prompts and results to specific regions, designated market areas (DMAs), and location groups. Brand-level summaries alone are not enough for large, multi-location enterprises.

- Prompt governance: Manage shared prompt libraries with clear ownership, approval processes, and version control. This ensures consistency and prevents uncontrolled changes.

- Evidence retention: Store AI-generated answers and their citation sources. Teams need this data for review, exporting, and audit purposes.

- Citation visibility: Determine whether LLMs rely on first-party sources, third-party websites, or both when generating brand-related answers.

- Coverage clarity: Know exactly which AI engines and answer surfaces are tracked, and which are not.

- Operational workflows: Route issues to the teams responsible for fixing listings, pages, or data sources.

- Enterprise security: Enforce role-based access, audit logs, and enterprise-grade authentication to meet security and compliance standards.
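
To make the evidence retention and citation visibility criteria more concrete, here is a minimal Python sketch of what a stored answer record and a first-party vs third-party citation split could look like. It is an illustration only, not any vendor's data model: the `AnswerRecord` fields, the `citation_mix` helper, and the example domains are all hypothetical.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class AnswerRecord:
    """One captured AI answer, kept for review, export, and audit (hypothetical schema)."""
    prompt: str
    engine: str
    market: str
    answer_text: str
    cited_urls: tuple[str, ...] = ()


def is_first_party(url: str, brand_domains: set[str]) -> bool:
    """True if the URL's host is a brand domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in brand_domains)


def citation_mix(record: AnswerRecord, brand_domains: set[str]) -> dict[str, int]:
    """Count how many cited URLs are first-party vs third-party."""
    first = sum(is_first_party(u, brand_domains) for u in record.cited_urls)
    return {"first_party": first, "third_party": len(record.cited_urls) - first}


# Example with placeholder data (assumed, not real results)
record = AnswerRecord(
    prompt="best dentist near me",
    engine="example-engine",
    market="Austin, TX",
    answer_text="(stored answer text)",
    cited_urls=(
        "https://www.example.com/locations/austin",
        "https://directory.example.org/austin-dentists",
    ),
)
print(citation_mix(record, {"example.com"}))
```

Keeping the market and engine on every record is what makes location-level reporting possible later; a brand-level total alone would hide which markets lean on third-party sources.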

Once enterprises know which capabilities matter, the next question is where those capabilities should be applied. AI visibility is only meaningful if it reflects the actual AI engines and discovery surfaces customers use to find brands today.

Which AI engines and discovery surfaces enterprises must monitor

Enterprise brands appear in multiple AI platforms, each with its own data sources, refresh cycles, and regional behavior. Effective LLM visibility tracking starts with understanding where potential customers actually encounter AI answers.

In 2026, the most important AI engines and discovery surfaces include:

- ChatGPT and custom GPTs, which increasingly shape early-stage brand consideration and service discovery

- Google AI Overviews, where AI-generated answers appear directly in search results, often above traditional listings

- Gemini, which powers AI responses across Google Search, Maps, and Android experiences

- Perplexity, which combines conversational answers with explicit citations that often favor authoritative first-party sources

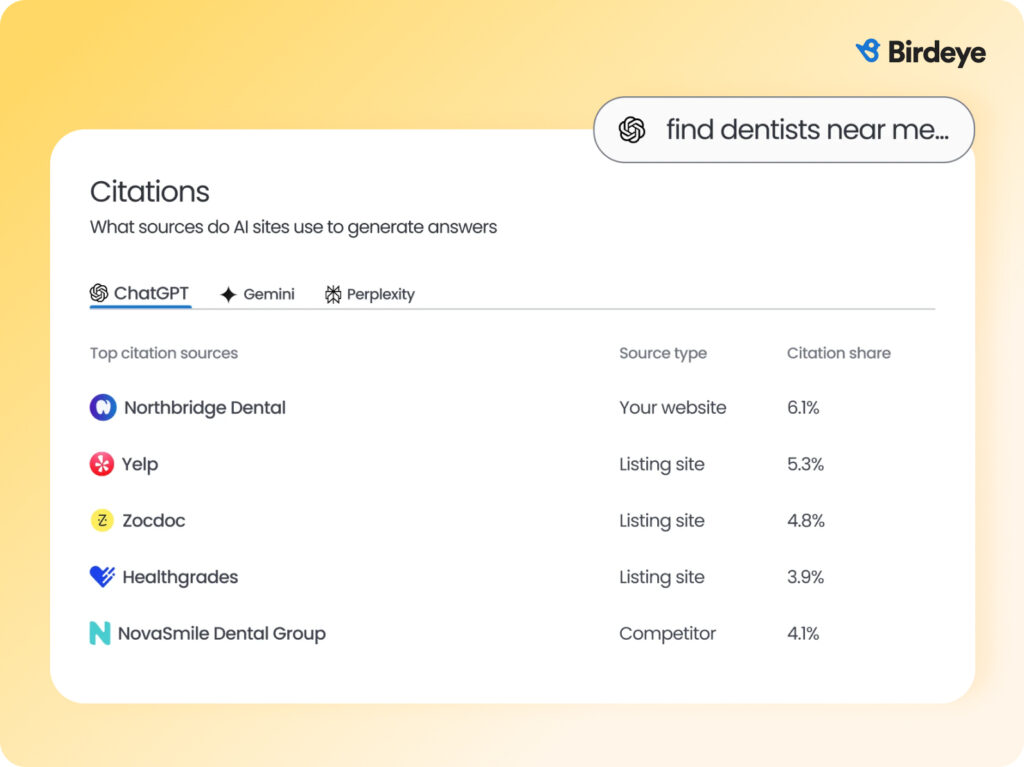

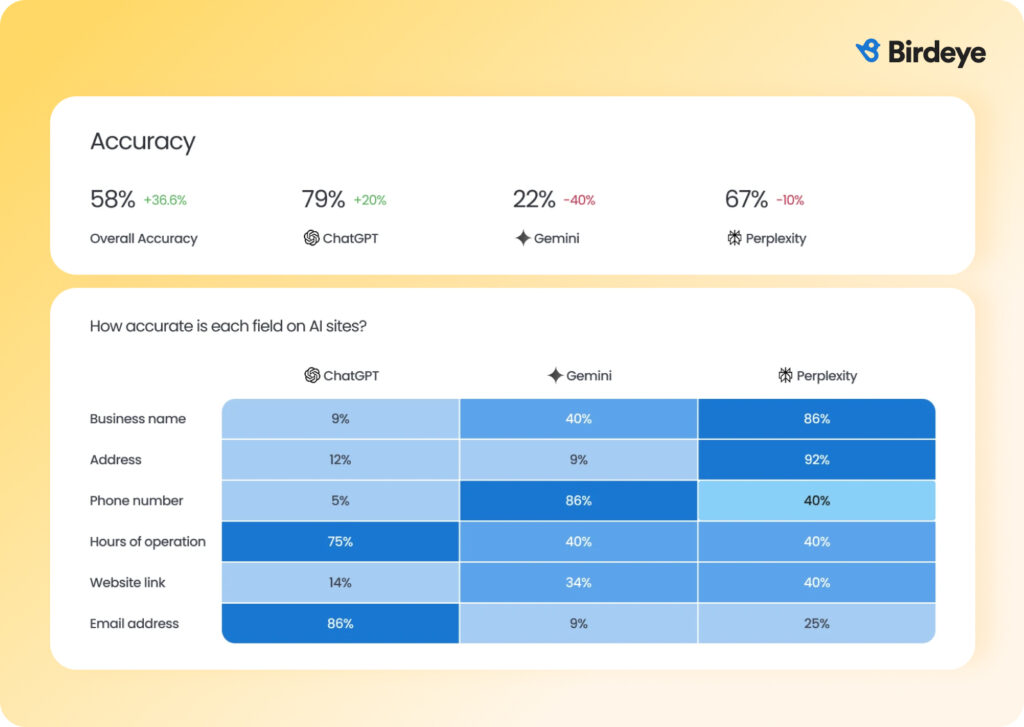

These platforms do not behave like traditional local search, and they do not behave like one another. Answers can vary by city, region, or service area depending on listing accuracy, review signals, local content quality, and industry-specific data sources. This is why enterprise teams must confirm which engines are tracked, how often prompts are re-run, and whether visibility data reflects real geographic differences. Without this validation, some markets remain unmonitored, even when dashboards appear complete.
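
One simple way to validate coverage is to list every engine and market pair you expect to be tracked and flag the pairs with no recent prompt run. The Python sketch below assumes you can export a "last run" date per engine and market; the `coverage_gaps` function, the seven-day threshold, and the sample data are hypothetical.

```python
from datetime import date, timedelta
from itertools import product


def coverage_gaps(engines, markets, last_run, today, max_age_days=7):
    """Return (engine, market) pairs that were never run or whose last run is stale."""
    cutoff = today - timedelta(days=max_age_days)
    gaps = []
    for engine, market in product(engines, markets):
        ran = last_run.get((engine, market))
        if ran is None or ran < cutoff:
            gaps.append((engine, market))
    return gaps


# Placeholder data: last prompt run per (engine, market)
last_run = {
    ("ChatGPT", "Austin"): date(2026, 1, 10),
    ("ChatGPT", "Denver"): date(2025, 12, 1),   # stale
    ("Perplexity", "Austin"): date(2026, 1, 9),
    # ("Perplexity", "Denver") was never run
}

gaps = coverage_gaps(
    engines=["ChatGPT", "Perplexity"],
    markets=["Austin", "Denver"],
    last_run=last_run,
    today=date(2026, 1, 12),
)
print(gaps)  # [('ChatGPT', 'Denver'), ('Perplexity', 'Denver')]
```

A check like this catches the case described above, where a dashboard looks complete at the brand level while individual markets have gone unmonitored.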

With coverage defined, enterprises can now evaluate which platforms are best suited to manage AI visibility in 2026 across every location in their portfolio.

10 Best LLM visibility tracking tools for multi-location enterprises in 2026

The best LLM visibility tracking tools in 2026 include Birdeye Search AI, Scrunch, Profound, Nightwatch, Meltwater, Rankscale AI, Otterly AI, Peec AI, Ahrefs Brand Radar, and ZipTie. Teams use these platforms to monitor AI-generated answers, track citations, compare visibility across prompts and markets, and identify gaps in brand representation as part of broader generative engine optimization (GEO) efforts.

What is Generative Engine Optimization?

Generative Engine Optimization (GEO) is the practice of influencing how LLMs generate answers by improving the underlying signals they rely on, including listing accuracy, reviews, content, and source authority. GEO combines visibility tracking with execution to shape how brands are discovered and recommended.

Below is a closer look at how each LLM visibility tracking tool works and which teams they are best suited for.

1. Birdeye Search AI

Best fit: Enterprise and multi-location brands that want to manage AI visibility at scale by connecting insights to execution, with strong governance, automation, and control across 100-10,000+ locations.

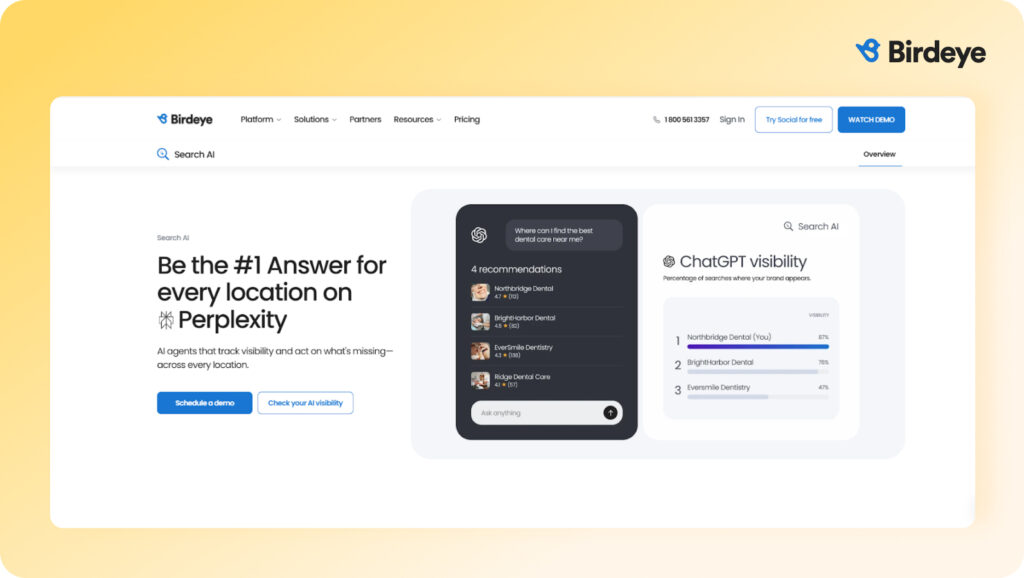

Birdeye Search AI is purpose-built for multi-location enterprises that need more than visibility reports. It helps teams understand how AI models like ChatGPT, Gemini, and Perplexity discover, describe, and recommend their brand and its various locations, and then act on those insights through automated execution.

Key capabilities

AI visibility insights

Search AI tracks how often your brand and individual locations appear in AI-generated answers. Teams can see visibility by prompt, theme, market, and location. This makes it easy to identify where locations lead, where they lag, and where they do not appear at all.

Competitive benchmarking

Enterprises can benchmark AI visibility against local and brand-level competitors across LLM platforms such as ChatGPT, Gemini, and Perplexity. This helps teams understand which brands LLMs recommend and how visibility compares across locations and markets.

Citation intelligence

Search AI reveals which websites, listings, and forums AI platforms rely on when generating answers. Teams can see whether AI is citing first-party brand pages or third-party sources and use these insights to guide content and optimization decisions.

Accuracy monitoring

Search AI tracks how accurately AI models present business information across locations. This includes hours, services, amenities, and other location attributes that influence trust and recommendations.

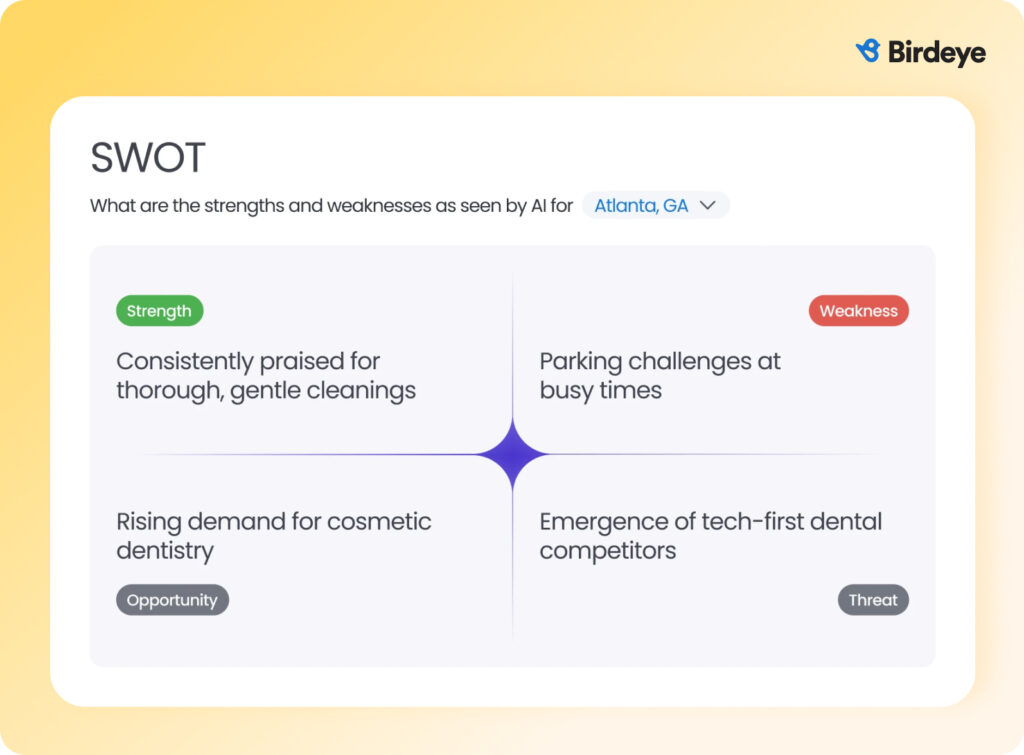
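
Conceptually, accuracy monitoring comes down to comparing what an AI answer states about a location with the business's own source-of-truth record. The Python sketch below shows that comparison in its simplest form. It is not Birdeye's implementation: the `find_mismatches` function, the attribute names, and the sample values are hypothetical, and it assumes the attributes have already been extracted from the answer text.

```python
def _normalize(value) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(str(value).lower().split())


def find_mismatches(source_of_truth: dict, ai_stated: dict) -> dict:
    """Return {attribute: (expected, stated)} for attributes the AI answer got wrong.

    Attributes the answer did not mention are skipped rather than counted as errors.
    """
    mismatches = {}
    for attr, expected in source_of_truth.items():
        stated = ai_stated.get(attr)
        if stated is None:
            continue
        if _normalize(stated) != _normalize(expected):
            mismatches[attr] = (expected, stated)
    return mismatches


# Placeholder location record and extracted AI-stated values
truth = {"hours": "Mon-Fri 9am-5pm", "phone": "555-0100", "services": "Teeth cleaning"}
stated = {"hours": "Mon-Fri 9am-6pm", "phone": "555-0100"}

print(find_mismatches(truth, stated))
# {'hours': ('Mon-Fri 9am-5pm', 'Mon-Fri 9am-6pm')}
```

Run per location and per engine, a comparison like this turns "incorrect hours somewhere" into a specific list of locations and attributes to fix.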

Sentiment and narrative analysis

Search AI analyzes how AI models describe your brand across locations. It surfaces sentiment, strengths, weaknesses, and recurring themes used in AI-generated answers, helping teams understand how perception varies by market and location.

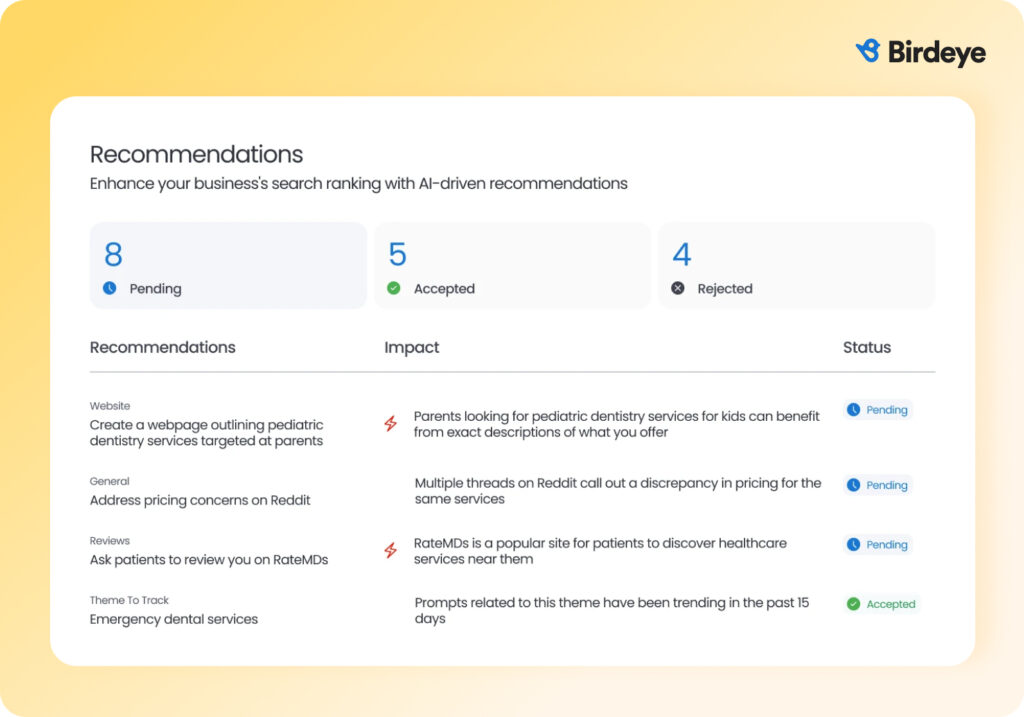

AI recommendations

Search AI recommends improvements and handles actions such as updating listings, generating reviews, and optimizing website content. This reduces manual effort and helps enterprises address AI visibility gaps at scale.

“Search AI is a great product. If I could give one piece of advice to multi-location brands, it would be to use Search AI sooner rather than later to get a leg-up on the competition.”

Neil Patel, Cofounder at NP Digital and Leading SEO Expert

Pros and cons of Birdeye Search AI

Pros

- Designed for operational complexity: Supports market-level reporting, governance, and workflows required by large, distributed teams.

- Reduces manual effort at scale: Automates updates to listings, reviews, and content based on LLM visibility insights.

- Strong governance and control: Role-based access, approvals, and audit trails make it well suited for large teams with compliance and accountability requirements.

- Broad AI engine coverage: Tracks brand and location visibility across major LLM answer platforms.

- Deep integrations and automation: Integrates with thousands of CRM, POS, and operational systems, enabling scalable automation.

Cons

- More comprehensive than teams with simple needs may require: Brands looking only for lightweight monitoring or basic mention tracking may find the platform more robust than they need.

- Best value realized at enterprise scale: Birdeye Search AI is optimized for multi-location enterprises with 100-10,000+ locations, so smaller brands may not fully benefit from its governance and automation capabilities.

Why Birdeye stands out

Birdeye is the #1 Agentic Marketing Platform, trusted by the biggest brands globally. The platform supports market-level insights, location governance, and enterprise workflows, enabling consistent AI visibility across hundreds or thousands of locations.

These capabilities help enterprises move beyond tracking AI visibility to actively shaping how LLMs discover, trust, and recommend each location. Instead of treating AI visibility as a reporting exercise, Birdeye connects insights directly to the systems that govern the signals AI engines use to generate answers, allowing enterprises to resolve issues at scale and maintain accuracy as models evolve.

Enterprises choose Birdeye because it supports:

- Role-based access and approval workflows

- Cross-location governance and accountability

- Integrations with 3,000+ CRM, POS, and operational systems

2. Scrunch

Best fit: Teams that want to understand how LLMs interpret their content and why certain pages are surfaced in AI-generated answers, without needing execution or automation.

Scrunch is built for teams that want to understand how LLMs interpret, rank, and cite their content. Instead of treating AI visibility like traditional SEO, it shows how large language models actually read your site, which content they trust, and where things break. It’s strongest at turning AI answers into usable diagnostics.

Key capabilities

- Tracks brand presence, ranking, and citations across major AI engines

- Breaks visibility down by prompt, topic, persona, and geography

- Flags technical and content issues that limit AI understanding

- Provides enterprise-grade access controls and APIs for analysis and reporting

Pros and cons of Scrunch

Pros

- Helps teams understand how content is parsed, trusted, and cited by LLMs beyond traditional SEO signals.

- Useful for identifying structural or clarity issues that affect AI answer inclusion.

Cons

- Designed to surface why content performs the way it does, not to fix underlying data or listings.

- Best for centralized content/SEO teams rather than location-by-location governance.

- Insights typically need to be acted on through CMS, SEO, or operational platforms.

3. Profound

Best fit: Marketing and strategy teams that want analytical depth, competitive context, and insight into AI-driven demand.

Profound is designed for teams that want a market-level view of why LLM answers favor certain brands and content, and how that shifts over time. It connects prompts, citations, and competitive signals to help teams prioritize where to focus, especially on high-impact questions and themes.

Key capabilities

- Tracks brand visibility across major AI answer engines

- Identifies which sources and domains LLMs rely on for different topics

- Estimates prompt demand to identify high-impact questions

- Provides share of voice, sentiment, and competitive benchmarks

Pros and cons of Profound

Pros

- Useful for identifying high-impact prompts and themes to guide planning and resource allocation.

- Combines citations, sentiment, and share-of-voice to explain shifts in brand visibility.

Cons

- Designed to inform strategy rather than fix data, content, or listings.

- Better suited for strategy and growth teams than multi-location brands.

- Teams typically rely on other systems to implement changes informed by Profound’s insights.

4. Nightwatch

Best fit: SEO, marketing, and analytics teams seeking a single system for both classic and AI search. It’s ideal for teams that don’t want to add another platform just to track LLM visibility and are focused on monitoring, not execution.

Nightwatch is built for teams that already live in SEO data and want to extend that visibility into AI search. It connects traditional rankings with AI-generated answers so teams can see how performance shifts across both worlds in one place. Instead of replacing SEO workflows, it expands them.

Key capabilities

- Monitors brand visibility across traditional search results and AI-generated answers

- Maps keyword rankings to AI mentions and share of voice

- Surfaces high-level sentiment and narrative patterns in AI responses

- Identifies queries and prompts associated with visibility shifts over time

Pros and cons of Nightwatch

Pros

- Extends existing SEO reporting into AI visibility without requiring new processes or tooling.

- Useful for understanding how keyword performance influences AI answer inclusion.

Cons

- Does not provide workflows to correct business data or execute improvements.

- Not built to manage multi-location-specific accuracy, governance, or execution at scale.

- Best for directional insights rather than deep LLM-specific analysis.

5. Meltwater

Best fit: PR, communications, and brand teams that monitor public narrative, media coverage, and AI-driven brand perception.

Meltwater looks at AI visibility through the lens of brand reputation rather than search performance. It helps teams understand how their brand is referenced across AI-generated answers, news, and social channels, and how those narratives shift over time. Meltwater is most valuable when AI answers are part of broader communications, reputation, or risk-monitoring efforts.

Key capabilities

- Monitors brand mentions across AI-generated summaries, news, and social channels

- Tracks sentiment changes and emerging narratives over time

- Connects AI mentions to broader media and communications context

- Surfaces potential reputational risks and opportunities early

Pros and cons of Meltwater

Pros

- Helps teams understand how brand stories and themes propagate across media and AI-generated content.

- Useful for detecting sentiment shifts, crisis indicators, or emerging topics that may influence public perception.

Cons

- Designed to monitor perception rather than influence how AI answers are generated.

- Lacks workflows for managing business data, listings, or local accuracy.

- Insights are typically acted on via communications teams and external processes.

6. Rankscale

Best fit: Marketing teams, agencies, and SMBs that want a simple way to track AI-answer visibility over time without complex setup or tooling.

Rankscale is built to give teams a quick, consistent view of where brands and pages appear in AI-generated answers. Rather than analyzing why visibility changes or how to fix issues, it focuses on turning AI mentions into clear scores and comparisons that are easy to track, report, and share, especially for ongoing monitoring or client reporting.

Key capabilities

- Tracks where brands and pages appear in AI answers

- Captures citations and prompt-level responses for review

- Compares visibility against competitors

- Exports data easily for reporting and analysis

Pros and cons of Rankscale

Pros

- Makes it easy to see whether and where a brand appears in AI answers.

- Simple scoring and comparisons work well for trend tracking and external reporting.

Cons

- Prioritizes simplicity over deeper analysis of LLM behavior or root causes.

- Lacks controls and structure for location-by-location governance.

- Improvements typically require manual work or other platforms.

7. Otterly AI

Best fit: Small teams, agencies, or brands that want a simple way to check whether they appear in AI-generated answers.

Otterly AI is built for teams that want a quick, easy way to see whether their brand appears in AI responses. It removes complexity and focuses on basic visibility, prompts, and reports. Think of it as a starting point for AI search tracking.

Key capabilities

- Monitors brand mentions and citations across AI search engines

- Turns keywords into trackable AI prompts

- Shows visibility trends in simple dashboards

- Helps teams spot gaps in content or sources

Pros and cons of Otterly AI

Pros

- Simple setup makes it easy to start tracking AI mentions with minimal effort.

- Budget-friendly entry point for small teams exploring AI visibility.

Cons

- Does not indicate which prompts or gaps matter most from a business-impact perspective.

- Does not support multi-location governance or complex organizational needs.

- Not designed for deep longitudinal analysis or forecasting trends over time.

8. Peec AI

Best fit: Teams that want to explore how their content competes in AI-generated answers without committing to complex tooling.

Peec AI helps teams monitor how their brand and content appear in AI-generated answers. It focuses on prompt-level visibility, competitive comparisons, and trend tracking, making it useful for basic analysis and content gap identification rather than execution or large-scale operations.

Key capabilities

- Tracks brand and content visibility across AI answer surfaces

- Allows prompt and query-level performance review

- Provides insight into competitive AI visibility signals

- Offers simple dashboards for trend analysis

Pros and cons of Peec AI

Pros

- Simple setup and navigation for first-time AI visibility tracking.

- Helps teams understand which questions and topics trigger competitor visibility more often.

Cons

- Built to surface patterns and comparisons rather than guide optimization or execution.

- Not intended for multi-location governance or enterprise-wide programs.

- Best used for early insight and hypothesis generation, not comprehensive AI visibility management.

9. Ahrefs Brand Radar

Best fit: SEO and brand teams that want to understand brand demand, authority signals, and visibility momentum across search and AI-adjacent surfaces.

Ahrefs Brand Radar is built to show how brand interest and authority change over time by analyzing brand mentions, branded queries, and link-related signals across Ahrefs’ index. Rather than tracking AI answers directly, it helps teams understand whether brand strength, awareness, and topical authority are increasing in ways that influence both search visibility and AI-generated references.

Key capabilities

- Analyzes branded search demand and brand growth trends

- Tracks changes in brand-related visibility over time

- Connects brand presence to link-based authority signals

- Highlights competitive shifts in brand interest and awareness

Pros and cons of Ahrefs Brand Radar

Pros

- Useful for understanding whether brand awareness and demand are rising or declining over time.

- Helps teams assess how brand strength and credibility compare to competitors within a category.

Cons

- Measures brand strength signals rather than how brands appear in specific AI-generated responses.

- Does not show which questions or topics trigger brand inclusion in AI answers.

- Insights are primarily strategic and must be acted on through other platforms.

10. ZipTie

Best fit: Marketing analysts and growth teams that want hands-on experimentation and qualitative insight into how AI answers reference brands.

ZipTie helps teams monitor brand mentions, citations, and sentiment across AI platforms. It’s designed for visibility tracking and insight generation, making it useful for understanding patterns in AI answers rather than managing execution or large-scale operations.

Key capabilities

- Runs and compares custom prompts across AI answer engines

- Captures full AI responses for side-by-side qualitative review

- Analyzes how phrasing and intent affect brand inclusion

- Supports manual tagging and analyst-led insight workflows

Pros and cons of ZipTie

Pros

- Well suited for testing how different prompt structures and intents influence AI answers.

- Appeals to teams that prefer hands-on exploration over predefined reporting frameworks.

Cons

- Does not offer integrated workflows for fixing listings, data errors, or local content at scale.

- Lacks controls and workflows designed for distributed, multi-location enterprise environments.

- Relies on manual review and interpretation rather than automated insight generation.

Looking across these tools, a clear distinction emerges in how LLM visibility is approached. Many platforms help brands understand how they appear in AI-generated answers, but stop short of helping teams improve those outcomes across locations. Birdeye goes further by connecting LLM visibility insights directly to the systems that manage listings, reviews, and content, making it easier for multi-location enterprises to turn visibility into consistent, scalable results.

FAQs about LLM visibility tracking tools

LLM visibility tracking tools help organizations understand and monitor how their brand appears in AI-generated answers across platforms such as ChatGPT, Gemini, Perplexity, and Google AI Overviews. Some platforms extend this visibility into execution and governance, while others focus primarily on analysis, diagnostics, or monitoring.

SEO tracking focuses on rankings, clicks, and traffic from traditional search results. LLM visibility tracking focuses on whether a brand appears in AI-generated answers, how it is described, and which sources AI models trust.

Enterprises should start with high-intent prompts, first-party citation coverage, and location data accuracy. These areas usually create the fastest improvements in AI visibility.

AI models weigh trust signals such as accurate listings, review volume and sentiment, source authority, and consistency across the web. Brands with clean, consistent data are more likely to be cited and recommended. Birdeye helps enterprises strengthen these signals by keeping listings accurate, reviews fresh, and content consistent across every location.

Yes, when visibility insights are connected to systems that manage listings, reviews, and content. This is where Birdeye’s AI agents matter. They turn visibility findings into actions like updating business information, generating reviews, and optimizing local content without manual work.

Reviews are a major trust signal for AI models. They influence whether a brand is recommended, how it’s described, and which locations are surfaced in responses. With Birdeye Reviews AI, enterprises can generate, monitor, and respond to reviews, improving the trust signals LLMs use when producing answers.

Turning LLM visibility into an enterprise advantage with Birdeye

AI-generated answers already influence how customers choose businesses. The brands that succeed are not the ones that only track AI visibility, but those that can consistently improve it across all locations.

When enterprise teams examine AI visibility, they often uncover real issues. Locations may display incorrect hours. Competitors may be recommended instead. AI models may cite third-party directories rather than first-party brand pages. Identifying these problems is only the first step. The real challenge is fixing them everywhere in a controlled, scalable way without adding manual work.

This is where Birdeye stands apart. Birdeye acts as both the system of record and the system of action for online discovery. It brings together listings, reviews, content, and customer signals on a single platform and connects AI visibility insights directly to execution. With Birdeye’s AI agents, enterprises can automatically keep business data accurate, strengthen trust signals, and improve how LLMs describe and recommend each location.

See how leading enterprises use Birdeye Search AI to stay accurate, trusted, and visible across AI-driven search experiences. Watch the free demo and explore Search AI in action.
